Synthetic Environments Engine

Overview

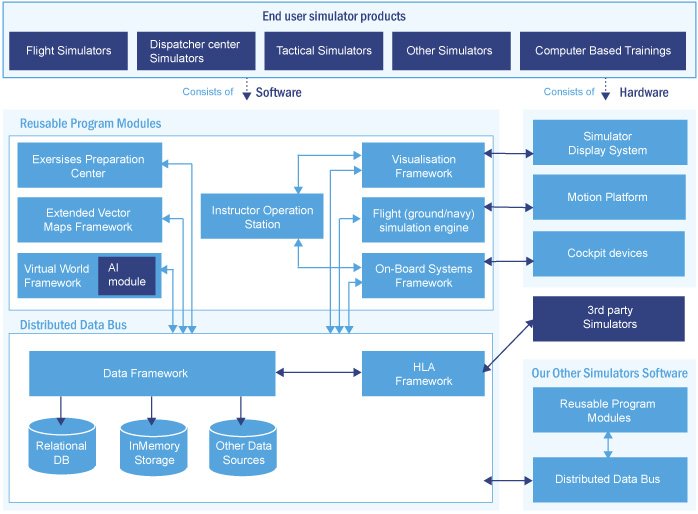

We have created the Synthetic Environments Engine, which models a virtual world with terrain, cultural objects, ground and atmospheric environments, and different types of AI-controlled units. It is used in different simulators, such as Full Mission Simulators (FMS), Staff Officer Tactical Trainers and Command & Control Simulators.

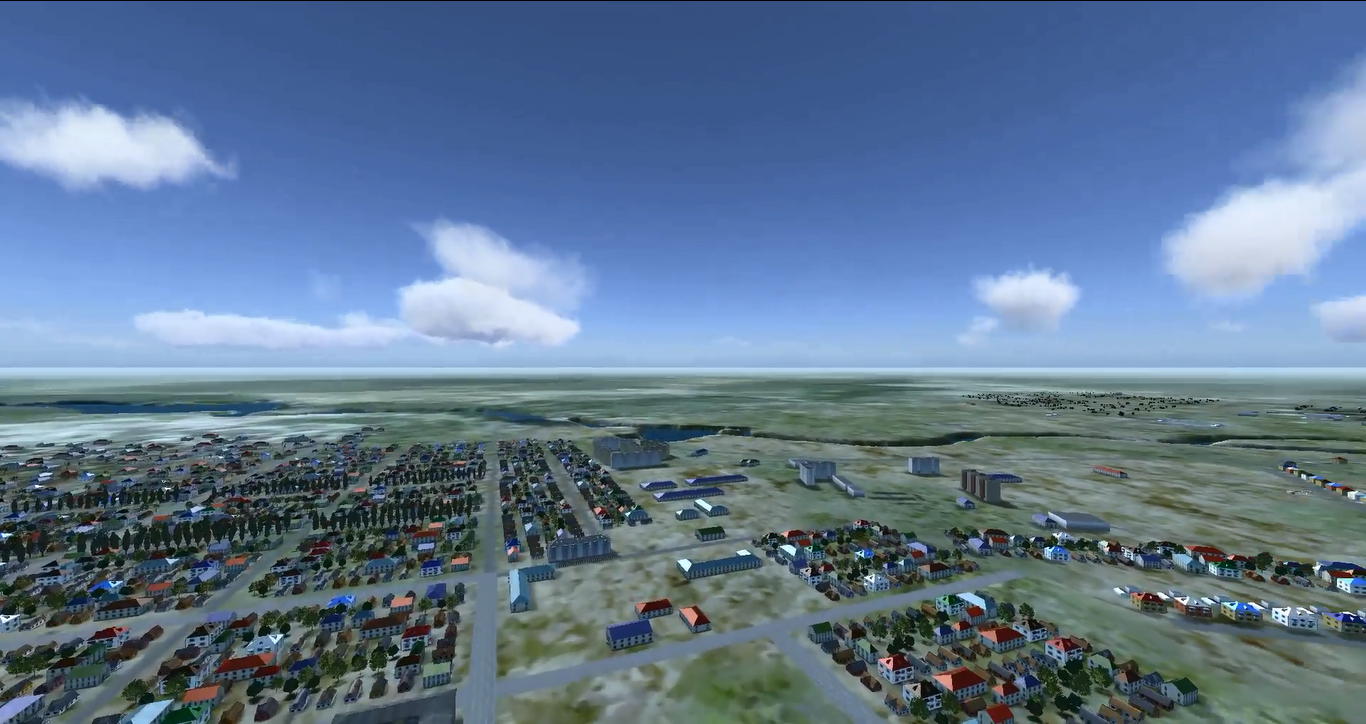

Here you can see the reconstruction of a real-world city from digital vector maps, terrain imagery, a 3D model library and an SRTM elevation map. The roads, the river in the top right, the city blocks and the bush/tree areas are generated automatically from the corresponding vector map. The airport environment in the bottom left was added manually.

The high-performance PhysX engine (developed by nVidia) is used as the basis of the virtual world. This is a battle-proven technology used in many modern games. It lets us simulate huge environments with a large number of interacting static and dynamic objects, with collision detection and processing, line-of-sight checks and other physics engine capabilities.

Here you can see a 3D view of a mechanized infantry attack as part of a Command & Control Simulator. The formation is led by the Artificial Intelligence that is part of our Synthetic Environments solutions.

Using PhysX lets us process tens of thousands of units without loading the CPU, because this technology works directly with the GPU (the graphics processing unit on the PC graphics card). All unit data is loaded into PhysX so that unit interactions are processed on the GPU.

Here you can see the AI logic used for infantry behaviour modelling. The infantry try to accomplish their mission task: attack. They move by bounds and lie down after a few steps to be harder for enemy units to target. Because ammunition is limited, they conserve it and shoot only when the target is within range and can be damaged by the current weapon and ammunition type. On a hill slope, a soldier does not lie down in the grass but kneels instead.

Here you can see the modelled flight of an airplane and a helicopter according to their mission tasks, with flight dynamics calculated from the mission points.

Here you can see the visualization of a formation fight. Blue forces have orders to attack, red forces to defend. You can see real-time orders being given to the blue forces to move into position and attack, and an order to the red forces to march to new positions. Beneath the map there is a virtual world in which every unit is modelled simultaneously and acts according to its mission tasks under the control of artificial intelligence.

Main features of the technology are:

- Real-time simulation of the real world, including movement and combat actions of different unit types

- Correspondence of the virtual world to a real-world area, achieved using an elevation map, terrain imagery, digital vector maps and 3D models typical for the region. Random terrain generation is also available

- Thousands of simulated units in one virtual world

- Distributed synchronized world that allows different users to work with the same world simultaneously

- Integrated physics, including collision and damage processing. nVidia PhysX is used to keep these calculations fast

- Rich logic of the simulated world, including bullet and shell ballistics that depend on weather conditions as well as on ammunition and weapon characteristics, various unit parameters that are taken into account during simulation, trafficability etc. Each unit acts according to its characteristics and to physical principles. All characteristics can be changed in the characteristics editors, and behaviour changes to match the new values.

- Graphical editor of starting positions and mission tasks for units and formations during the simulation process

- Support for any number of viewpoints, including different 2D views (paper scan-based map, digital vector map etc.) and 3D views

- Rich AI (Artificial Intelligence) that makes decisions for each simulated unit (AI features are described in detail below)

The core of technology

This is a timer-based distributed calculation technology with network synchronization. In each time frame the next state of the virtual world is calculated, and the model is updated to reflect it. The next world state is calculated from the AI logic and from the orders given to units, either in advance through the mission editors or in real time. For example, in a new time frame one unit may move, another may fire a shot, a third may explode, and so on.
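The frame-by-frame update can be illustrated with a minimal Python sketch. It is not the engine's code: the `Unit` class, the `step_world` and `run` functions and the one-dimensional movement model are assumptions made purely for illustration. Orders are applied at the start of a frame, then the next world state is computed from the current one.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    """Hypothetical simulated unit moving along a single axis."""
    name: str
    position: float  # metres
    speed: float     # metres per second, changed by orders


def step_world(units, dt):
    """Compute the next world state from the current one for one time frame."""
    for unit in units:
        unit.position += unit.speed * dt


def run(units, frames, dt=0.1, orders=None):
    """Advance the world frame by frame.

    orders maps a frame index to {unit name: new speed}; it stands in for
    orders coming from a mission editor or issued in real time.
    """
    orders = orders or {}
    by_name = {unit.name: unit for unit in units}
    for frame in range(frames):
        # Apply the orders scheduled for this frame before simulating it.
        for name, speed in orders.get(frame, {}).items():
            by_name[name].speed = speed
        step_world(units, dt)
    return units


tank = Unit("tank-1", position=0.0, speed=0.0)
# Order the tank to advance at 2 m/s from frame 0 and to halt at frame 10.
run([tank], frames=20, dt=0.5, orders={0: {"tank-1": 2.0}, 10: {"tank-1": 0.0}})
print(tank.position)
```

A fixed `dt` keeps every machine that runs the same loop on the same sequence of states, which is what makes the synchronization scheme described next practical.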

Network and FMS simulators require synchronization between the different simulators and their parts. All the PCs that take part in a simulation calculate the virtual world simultaneously with the same algorithms, using dead reckoning (http://en.wikipedia.org/wiki/Dead_reckoning). Each computer in the simulator therefore runs the same virtual world engine module to stay synchronized with the network. Because of the human factor (for example, a helicopter pilot opens fire with a machine gun and damages a unit), such events must be propagated to every computer so that the flow of the simulated world changes accordingly. For this purpose there are synchronization points, which occur many times per second but less often than time frames, to reduce network traffic and improve the performance of the simulated world. At each synchronization point the current state of the simulated units is transferred across the network, together with any new orders issued by simulator users (pilots in flight simulators, officers in Command & Control simulators, dispatchers in air traffic control simulators, etc.). Changes to the virtual world made by humans take priority when states are synchronized.
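As a rough illustration of dead reckoning between synchronization points, the Python sketch below extrapolates a unit's position from the last state received over the network, assuming constant velocity. The `SyncedState` layout, the frame rate and the synchronization interval are hypothetical values chosen for the example, not parameters of the engine.

```python
from dataclasses import dataclass


@dataclass
class SyncedState:
    """Last state received at a synchronization point (hypothetical layout)."""
    time: float      # simulation time of the update, seconds
    position: tuple  # (x, y, z) in metres
    velocity: tuple  # (vx, vy, vz) in metres per second


def dead_reckon(state, now):
    """Extrapolate the position at time `now`, assuming constant velocity."""
    dt = now - state.time
    return tuple(p + v * dt for p, v in zip(state.position, state.velocity))


FRAME_DT = 0.02       # assumed: 50 time frames per second
FRAMES_PER_SYNC = 5   # assumed: one synchronization point every 5 frames


def is_sync_frame(frame_index):
    """True when the state should be exchanged over the network on this frame."""
    return frame_index % FRAMES_PER_SYNC == 0


# A helicopter last reported at t = 10 s, flying east at 50 m/s at 100 m altitude.
heli_state = SyncedState(time=10.0, position=(0.0, 0.0, 100.0), velocity=(50.0, 0.0, 0.0))
print(dead_reckon(heli_state, now=10.5))
```

Between synchronization points every machine can call `dead_reckon` locally, so no traffic is needed until the next update or until a human action changes the unit's state.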

Thus, we can create virtual worlds that can be simultaneously modelled on different computers of different simulators in real-time with synchronization between them.

Correspondence to real world

Because our world is built from real-world digital vector maps and elevation data, its environment corresponds to a real-world area, including 3D visualization and land types. This makes training as realistic as possible ahead of the same training or operations in the real world. Please refer to the sections Vector maps based world generator, Relief modelling framework and 3D Visualization Framework for additional information.

Here you can see city blocks generated automatically from digital vector map data using 3D models typical for the region. Roads are also generated automatically from the digital vector map.

Here you can see the terrain relief used in the model. It is taken from SRTM elevation data and corresponds to the real terrain in this region.

Cultural objects are added to the map using 3D models developed by our team.

Huge virtual world support

Thanks to performance optimizations, our world can support thousands of units that act independently in the virtual world. This is extremely useful for a Command & Control simulator at battalion or brigade level, or for simulating a large combat area for an FMS.

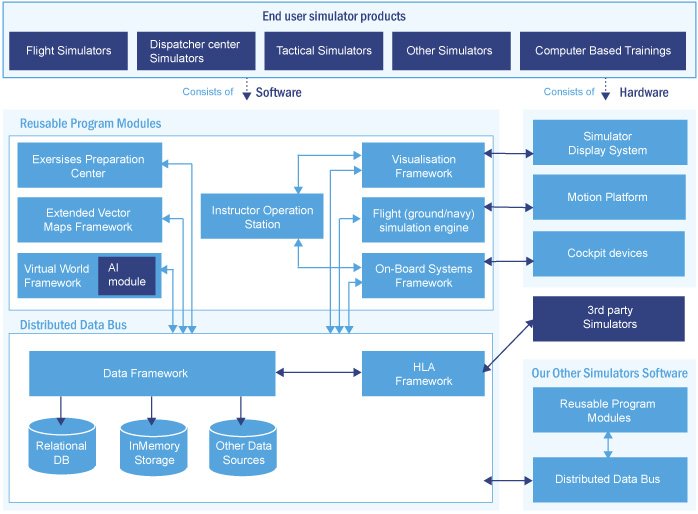

Automatically synchronized distributed model

The distributed model of the virtual world, shared between all the computers in the simulators, is supported by the HLA (High Level Architecture) protocol. The model is stored in Plasticine (our data framework) data structures, which means that everything is synchronized automatically. Simulator developers do not have to know anything about HLA: they just develop their software, and all synchronization is done transparently for them.

For example, a developer implements the movement logic of a helicopter. Once the position parameters of the helicopter change inside the virtual world model, the engine synchronizes this data between all the computers automatically. The developer does not need to trigger HLA events or implement any synchronization code.
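One way such transparent synchronization could work is change tracking on the model's data structures. The Python sketch below is only an illustration of that idea: `SyncedEntity`, its `flush` method and the `publish` callback are hypothetical names, and the code shows neither Plasticine's actual API nor any real HLA calls.

```python
class SyncedEntity:
    """Hypothetical entity whose attribute writes are recorded for synchronization."""

    def __init__(self, entity_id, publish, **attributes):
        # Bypass __setattr__ so that initial values are not reported as changes.
        object.__setattr__(self, "entity_id", entity_id)
        object.__setattr__(self, "_publish", publish)
        object.__setattr__(self, "_dirty", {})
        for name, value in attributes.items():
            object.__setattr__(self, name, value)

    def __setattr__(self, name, value):
        # Store the value and remember that it changed since the last flush.
        object.__setattr__(self, name, value)
        self._dirty[name] = value

    def flush(self):
        """Send all pending changes in one update, e.g. at a synchronization point."""
        if self._dirty:
            self._publish(self.entity_id, dict(self._dirty))
            self._dirty.clear()


sent = []  # stands in for the network layer
heli = SyncedEntity("heli-1", lambda eid, changes: sent.append((eid, changes)),
                    x=0.0, y=0.0, altitude=100.0)

# Behaviour code only assigns attributes; it contains no synchronization calls.
heli.x = 25.0
heli.altitude = 120.0
heli.flush()
print(sent)
```

The behaviour code never mentions the network: the infrastructure observes the writes and decides when to publish them.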

This automatic synchronization drastically speeds up simulator development, because developers only implement the behaviour of entities and do not have to write any infrastructure code for synchronization.

Integrated physics

Physics is integrated into the virtual world, with the nVidia PhysX engine as the physics engine. It is used, for example, for collision detection: when two helicopters collide because they came too close to each other, or when a bullet hits a soldier after a shot.

The physics engine detects impacts automatically and, as a result, can destroy units and remove them from the virtual world.
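The idea of detecting impacts and removing the affected units can be shown with a simplified Python sketch. It does not use PhysX or mirror its API: it assumes every unit is approximated by a bounding sphere and tests all pairs directly, whereas a real physics engine uses far more efficient spatial structures. The function names and the rule that every colliding unit is destroyed are assumptions for the example.

```python
import math
from itertools import combinations


def find_collisions(units):
    """Return pairs of unit names whose bounding spheres overlap.

    units maps a name to (position, radius), with position as (x, y, z) in metres.
    """
    hits = []
    for (name_a, (pos_a, r_a)), (name_b, (pos_b, r_b)) in combinations(units.items(), 2):
        if math.dist(pos_a, pos_b) <= r_a + r_b:
            hits.append((name_a, name_b))
    return hits


def remove_destroyed(units, hits):
    """Drop every unit involved in a collision (simplified damage rule)."""
    destroyed = {name for pair in hits for name in pair}
    return {name: unit for name, unit in units.items() if name not in destroyed}


world = {
    "heli-1": ((0.0, 0.0, 100.0), 8.0),
    "heli-2": ((10.0, 0.0, 100.0), 8.0),   # 10 m apart, radii sum to 16 m
    "tank-1": ((500.0, 0.0, 0.0), 5.0),
}
hits = find_collisions(world)
world = remove_destroyed(world, hits)
print(hits, sorted(world))
```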

The high-performance PhysX engine lets us process tens of thousands of units by using the GPU (the graphics processor on the video card) instead of the CPU.

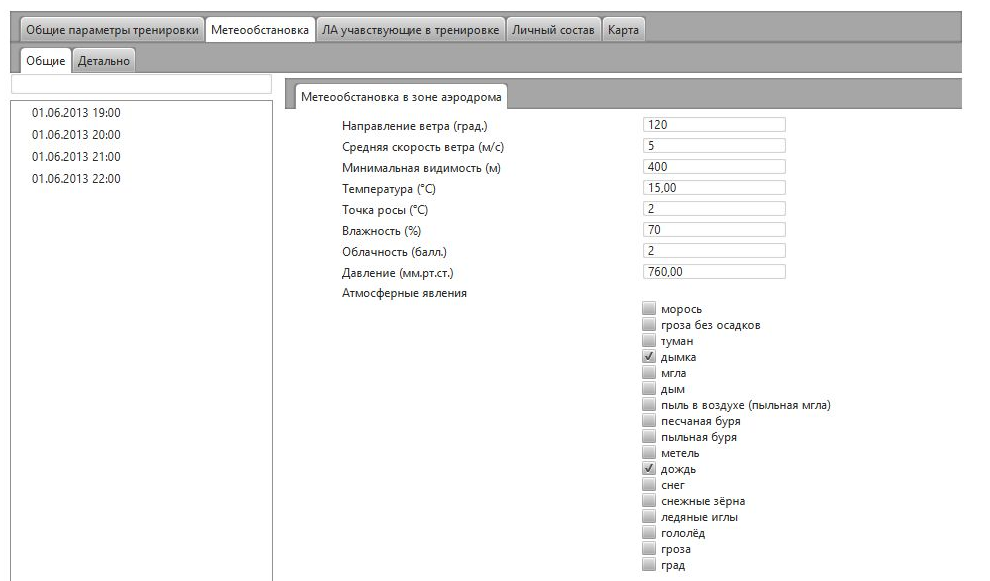

Rich logic of simulated world

Many properties of units are taken into account in the simulated world. For example, the ballistics of a bullet or shell depend on the weather conditions, on the ammunition parameters defined in the properties editor, and on the parameters of the weapon that fired it. A bullet can only be fired from an appropriate weapon, the trafficability of a vehicle depends on its track diameter, the number of soldiers on board depends on the corresponding parameter in the parameters editor, and so on.

Many factors are taken into account in the simulation algorithms, and the list is extensible: unit parameters, atmosphere, relief, time of day, etc.
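To show how weapon, ammunition and weather parameters can all feed into one calculation, here is a small Python sketch of a projectile trajectory. It is an assumed textbook-style model, not the engine's ballistics: quadratic air drag is applied relative to air moving with the wind, and the trajectory is integrated in small time steps. The parameter names `muzzle_speed`, `drag_k` and `wind_x` are illustrative.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def shot_range(muzzle_speed, elevation_deg, drag_k=0.0, wind_x=0.0, dt=0.001):
    """Horizontal distance travelled before the projectile returns to launch height.

    muzzle_speed: weapon parameter, m/s
    drag_k: ammunition parameter, 1/m (quadratic drag per unit mass, assumed model)
    wind_x: weather parameter, m/s, positive along the line of fire (tailwind)
    """
    angle = math.radians(elevation_deg)
    x, y = 0.0, 0.0
    vx = muzzle_speed * math.cos(angle)
    vy = muzzle_speed * math.sin(angle)
    while True:
        # Drag acts against the velocity relative to the moving air.
        rel_x, rel_y = vx - wind_x, vy
        rel_speed = math.hypot(rel_x, rel_y)
        ax = -drag_k * rel_speed * rel_x
        ay = -G - drag_k * rel_speed * rel_y
        vx += ax * dt
        vy += ay * dt
        x += vx * dt
        y += vy * dt
        if y <= 0.0:
            return x


# Same weapon and ammunition, different weather.
calm = shot_range(100.0, 45.0, drag_k=0.0005)
headwind = shot_range(100.0, 45.0, drag_k=0.0005, wind_x=-10.0)
tailwind = shot_range(100.0, 45.0, drag_k=0.0005, wind_x=10.0)
print(headwind < calm < tailwind)
```

Changing any one of the three inputs changes where the shell lands, which is the kind of dependency the properties editors expose.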

Customization of start positions and unit mission tasks

You can set the start position of each unit and assign its mission tasks in graphical mode. These tasks are processed by the AI engine, which drives the behaviour of the simulated units so that they complete their mission tasks.

When starting positions are set, relief, land types and environment objects are taken into account. For example, you cannot position a tank on a building, or on a mountainside steeper than the angle set in the properties editor.
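A placement check of this kind might look like the following Python sketch. The elevation grid, the set of building cells and the function names are assumptions for the example; the slope is estimated with central differences, so the check only applies to interior grid cells.

```python
import math


def slope_deg(heights, row, col, cell_size):
    """Approximate terrain slope at an interior grid cell, in degrees."""
    dz_dx = (heights[row][col + 1] - heights[row][col - 1]) / (2 * cell_size)
    dz_dy = (heights[row + 1][col] - heights[row - 1][col]) / (2 * cell_size)
    return math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))


def can_place(heights, buildings, row, col, cell_size, max_slope_deg):
    """A unit may start on a cell that is free of buildings and not too steep."""
    if (row, col) in buildings:
        return False
    return slope_deg(heights, row, col, cell_size) <= max_slope_deg


# 3 x 3 elevation grids in metres with 30 m cells (hypothetical data).
flat = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
hillside = [[0, 30, 60], [0, 30, 60], [0, 30, 60]]  # rises 1 m per metre eastwards

print(can_place(flat, set(), 1, 1, cell_size=30, max_slope_deg=30))      # flat ground
print(can_place(hillside, set(), 1, 1, cell_size=30, max_slope_deg=30))  # 45 degree slope
print(can_place(flat, {(1, 1)}, 1, 1, cell_size=30, max_slope_deg=30))   # building cell
```

Here `max_slope_deg` plays the role of the per-unit angle limit configured in the properties editor.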

A computer-generated forces mechanism can also be used to generate whole formations and position them automatically, taking terrain properties into account.

Different views of simulated world

On-map situational view

Far distance 3D view

The world is distributed between the machines in the network. Because it uses HLA, different viewpoints can be attached to the virtual world: the 2D and 3D screens of a trainee, instructor screens, cockpit screens, etc.

Extensibility

The virtual world engine was built for maximum extensibility, with great attention paid to this at the design stage. All the points described above are therefore extension points for richer logic.